Have you ever needed to understand a new project and started reading the tests only to find that you have no idea what the tests are doing? Writing great test documentation as you are writing tests will improve your tests and help you and others reading the tests later. We will first look at why test documentation is important both when writing tests and for future readers and then look at a framework that helps give some structure to your test documentation. Next, we will look at a showcase of the flake8-test-docs tool that automates test documentation checks to ensure your documentation is great! Finally, we briefly discuss how this framework would apply in more advanced cases, such as when you are using fixtures or parametrising tests.

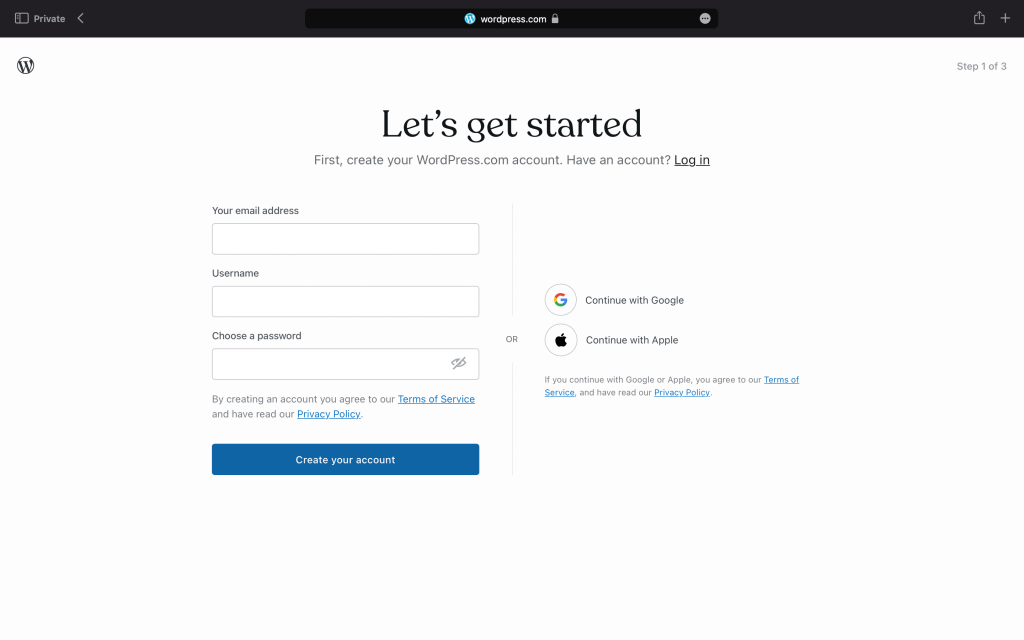

To aid us along the way, let’s consider an example. Let’s say we are writing a web API and we are currently thinking about the API call the front end makes to create a new user.

Let’s start the discussion with why test documentation is important as you write your tests. Just as design is important when you are writing production code, writing a good test takes some thought. You are probably aware of test-driven development (TDD), a technique where tests are written before production code. We won’t discuss TDD in detail here, although great documentation can help lift your TDD game as well. Writing test documentation before you write test code is a similar concept and comes with similar benefits. In particular, before you can write a test, you need to know what you are actually going to test. Expressing that in English will help you figure out what you need to write in code, and what better way of expressing your tests in English than starting with the test documentation? This way, documentation is not an afterthought to get you through the PR process. A good way to frame a test is to think about what you need before the test can be executed (the test setup), what you are going to do during the test (the execution) and what you need to check to ensure the test succeeded (the checks).

We will start with what you need before you can run the test (the test setup, which may involve fixtures). This covers things like defining test data such as constants, setting up a database connection, creating an instance of the class you are about to test or anything else you need to be able to run the function or piece of code under test. For the documentation, describe what you need for the test. In our example of an API to create a new user:

- We will need a running instance of our API

- We need to connect the API to a database (which may be mocked or could be an in-memory database)

- The database needs to have a few tables defined, such as the user table

- We will need some test data for the user to be created, such as a name, email address and password

The next section is the test execution. This can be as simple as calling a function with the data created during the setup, or it may involve running a few functions, retrieving class attributes and/or sending an HTTP request. It is good to keep the test execution simple, because a complex execution makes the test difficult to understand and makes it easier to accidentally introduce bugs in your tests. For the documentation, describe what the test does with the code under test, such as calling an API endpoint with the test data created during setup. In the case of creating a new user for our website, it would be sending a POST to the /user endpoint with the name, email address and password we defined during the test setup. It is better to define test data in the test setup so that the test execution is as simple and focussed as possible. As a side benefit, this also makes any later test parametrisation easier.

The last part of a test is where you check that the execution went as you expected. It is also good to limit the complexity here, although sometimes a few closely related checks can be performed in the same test. Some judgement is needed. If the checks are excessively simple, you may end up with very similar tests where the only difference is the checks, which is a sign that you can probably combine those tests into one. On the other hand, if you need a lot of complex logic for the checks, that could be a sign that it would be worth breaking up the test into a few separate tests. For the documentation, describe the final state you expect the system to be in. In the case of creating a new user for our website, we should probably check that the response was a 2XX series response and that the user data was added to the database. In this case we have two checks in the same test because they are closely related.
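The setup, execution and checks described above can be sketched in code as follows. This is a minimal, self-contained illustration rather than the real API test: `create_user` is a hypothetical stand-in for the POST to the /user endpoint, the 201 status code and the table columns are assumptions, and Python's built-in `sqlite3` module plays the role of the in-memory database.

```python
import sqlite3


def create_user(connection, user):
    """Hypothetical stand-in for the POST /user handler; returns a status code."""
    # A real handler would hash the password before storing it.
    connection.execute(
        "INSERT INTO user (name, email, password) VALUES (?, ?, ?)",
        (user["name"], user["email"], user["password"]),
    )
    return 201  # assumed success code; any 2XX response would satisfy the test


def test_create_user():
    # Setup: an in-memory database with the user table, plus the test data.
    connection = sqlite3.connect(":memory:")
    connection.execute("CREATE TABLE user (name TEXT, email TEXT, password TEXT)")
    user = {"name": "David", "email": "david@example.com", "password": "secure"}

    # Execution: a single call that only uses data defined during the setup.
    status_code = create_user(connection, user)

    # Checks: two closely related checks on the final state of the system.
    assert 200 <= status_code < 300
    rows = connection.execute("SELECT name, email FROM user").fetchall()
    assert rows == [(user["name"], user["email"])]
```

Keeping all the test data in the setup means the execution stays a single line, which is what makes the test easy to parametrise later.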

The final test documentation for creating a user might look like the following:

```python
def test_create_user():
    """
    given a running API instance connected to an
    in-memory database with the user table
    created and the name, email and password
    for a user
    when the user name, email and password are
    sent to the /user endpoint as a POST
    then a 2XX response is returned and the user
    data is added to the user table.
    """
```

You can see that writing down the test setup, execution and checks helped us figure out exactly what we need to do in the code of the test. This has also given us a head start on documentation and will make it easier for others to understand the tests as you go through, for example, a PR to land your change. This is a great segue to the next topic: test documentation is also important for readers of the code (which might be you a few months later).

The tests of a project should capture all the expected behaviours of the code. In theory, if something does not have an associated test, it should be safe to remove or alter the behaviour of the code. If your users rely on a behaviour not captured in a test, write some new tests with some great documentation! Having established that all the expected behaviours should have an associated test, tests are essentially documenting the requirements of the project. When you get user requirements to implement, does it help you if the requirements are logically laid out and are well documented? Making tests clear and well documented will similarly benefit future readers of and contributors to your project. For example, consider the following code that implements the test case we documented above and see if it is clear from the code alone what the test is expecting of the API:

```python
def test_create_user(db):
    user = {
        "name": "David",
        "email": "david@jdkandersson.com",
        "password": "secure",
    }

    requests.post("http://localhost/user", data=user)

    users = db.user.select()
    assert len(users) == 1
    assert users[0].name == user["name"]
    assert users[0].email == user["email"]
    assert users[0].password == hashed(user["password"])
```

You can guess what is going on, although you won’t know that the user table needs to have been created. The test implies that the API needs to be running and connected to the database, although it isn’t clear whether the database is mocked or whether we are actually connecting to the production database. Additionally, the HTTP response code isn’t being checked, so it is up to the reader to guess whether the response code check was accidentally forgotten or deliberately left out.

Now that we have established the benefits of test documentation, we can formalise the structure of the test documentation we have already established to provide some consistency across our tests. Specifically, we expect three sections explaining the test setup, execution and the checks. I have found structuring the docstrings of my test functions in the following way makes it easy for me to think through the usual stages of a test and also makes it easy to see if I have forgotten one of the sections:

```python
def test_create_user():
    """
    arrange: given a running API instance
        connected to an in-memory database with
        the user table created and the name,
        email and password for a user
    act: when the user name, email and password
        are sent to the /user endpoint as a POST
    assert: then a 2XX response is returned and
        the user data is added to the user table.
    """
```

Giving each section a name makes it easy to jump between the sections and makes it clear to the reader whether the test setup, execution or checks are being discussed. Indenting the lines that continue the description of a section one level deeper than the section name also makes it obvious that those lines belong to that section.

The open source flake8-test-docs flake8 plugin automatically checks that each test function has a docstring that is formatted according to the above framework. This makes adopting the framework easier, and you can use the plugin to automate the checks in your PR process. The plugin’s GitHub page (https://github.com/jdkandersson/flake8-test-docs) includes a getting started guide and a more detailed description of what the linting checks look for, how to configure which files and functions to check and how to customise the checks, such as using an alternative to the arrange/act/assert section names used by default. For a brief showcase, if the linter is run against the earlier example that was missing documentation, it will produce the warning:

```text
$ flake8 test_source.py
test_source.py:1:1: TDO001 Docstring not defined on test function, more information: https://github.com/jdkandersson/flake8-test-docs#fix-tdo001
```

If you accidentally forget one of the sections, such as the assert section:

```python
# test_source.py
def test_create_user():
    """
    arrange: given a running API instance
        connected to an in-memory database with
        the user table created and the name,
        email and password for a user
    act: when the user name, email and password
        are sent to the /user endpoint as a POST
    """
```

It will remind you to include that section:

```text
$ flake8 test_source.py
test_source.py:2:5: TDO002 the docstring should include "assert" describing the test checks on line 7 of the docstring, more information: https://github.com/jdkandersson/flake8-test-docs#fix-tdo002
```
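To make the idea behind this kind of check concrete, here is a rough sketch of how a missing section could be detected using only the standard library. This is not how flake8-test-docs is implemented, and it ignores everything the plugin can be configured to do. `missing_sections` is a hypothetical helper that only looks for the default arrange/act/assert section names at the start of a docstring line.

```python
import inspect

# The default section names described in this article.
SECTION_NAMES = ("arrange", "act", "assert")


def missing_sections(test_function):
    """Return the section names that do not start any line of the docstring."""
    # inspect.getdoc strips the common indentation, so section names end up at
    # the start of a line while continuation lines stay indented.
    docstring = inspect.getdoc(test_function) or ""
    lines = docstring.splitlines()
    return [
        name
        for name in SECTION_NAMES
        if not any(line.startswith(f"{name}:") for line in lines)
    ]


def test_create_user():
    """
    arrange: given a running API instance
        connected to an in-memory database
    act: when the user details are sent to the
        /user endpoint as a POST
    """


print(missing_sections(test_create_user))  # ['assert']
```

A function without any docstring would be reported as missing all three sections, which loosely mirrors the two kinds of warning shown above.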

Finally, let’s consider some more advanced tests and how the arrange/act/assert framework fits in. Firstly, if I’m using pytest fixtures, I usually include a brief description of the fixtures in the arrange part of the test documentation and put the detailed description in the docstring of the fixture itself. The second advanced use case is parametrising tests. Using pytest, I usually generalise the docstring of a parametrised test so that it applies to all the parameters and put the details of each case in the id parameter of a pytest.param.
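Both cases might look something like the following. This is a minimal sketch that assumes pytest is installed; the `user_data` fixture and the `validate_user` function are hypothetical names introduced only for illustration and are not part of the API discussed earlier.

```python
import pytest


def validate_user(user):
    """Hypothetical function under test: True if every required field is set."""
    return all(user.get(field) for field in ("name", "email", "password"))


@pytest.fixture
def user_data():
    """Valid user data with the name, email and password fields all set."""
    return {"name": "David", "email": "david@example.com", "password": "secure"}


@pytest.mark.parametrize(
    "missing_field",
    [
        # The id carries the details of each individual case.
        pytest.param("name", id="name missing"),
        pytest.param("email", id="email missing"),
        pytest.param("password", id="password missing"),
    ],
)
def test_validate_user_missing_field(user_data, missing_field):
    """
    arrange: given valid user data with one required field removed
    act: when the user data is validated
    assert: then the validation fails.
    """
    del user_data[missing_field]

    valid = validate_user(user_data)

    assert not valid
```

The test docstring only mentions the fixture briefly ("valid user data") and stays general enough to cover every parameter, while the fixture docstring and the ids hold the specifics. The ids also appear in the test names that pytest reports, which makes a failing case easy to identify.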

Summarising what we have discussed, the tests of a project capture the user requirements of the project. Great documentation helps you write clear tests and makes it easier for readers of the project to understand the requirements. Breaking the documentation into the test setup, execution and checks gives you a framework for thinking through your test and makes the test easy to understand. The flake8-test-docs flake8 plugin automatically checks that all your test functions have a docstring and that the docstring follows the arrange/act/assert framework.
